A new managed Kubernetes service has just been released: Clever Kubernetes Engine, or CKE to its friends.

In this article, we'll look at how to set up a Kubernetes cluster with this service and whether it is compatible with Cilium.

Installing CKE (and Cilium?)

What is CKE?

Clever Cloud is a French cloud provider. It has just released a brand-new service in beta: a managed Kubernetes.

Installing it

Prerequisites for getting this Kubernetes:

- a Clever Cloud account

- the clever CLI (I struggled on my amd64 Mac, so I installed it on my Linux VM)

First, authenticate:

clever login

You are given a link so that the CLI can access the Clever API with your account. Very standard.

Next, enable the feature:

clever features enable k8s

A mini tutorial is then displayed:

Experimental feature 'k8s' enabled

- Create a Kubernetes cluster:

clever k8s create my-cluster

clever k8s create my-cluster --org myOrg

- List Kubernetes clusters:

clever k8s list

- Get details about a Kubernetes cluster:

clever k8s get my-cluster

- Get kubeconfig file for a Kubernetes cluster:

clever k8s get-kubeconfig my-cluster

clever k8s get-kubeconfig my-cluster > ~/.kube/config

- Activate persistent storage on a Kubernetes cluster:

clever k8s add-persistent-storage my-cluster

- Delete a Kubernetes cluster:

clever k8s delete my-cluster

Learn more about Clever Kubernetes: https://www.clever.cloud/developers/doc/kubernetes/

Right, this experimental cluster needs a name. What shall we call it?

clever k8s create cilium

🚀 Cluster cilium (kubernetes_01KQ8866Y7VAC3EBHPPHPP7XH3) is being deployed

You can get its information with clever k8s get kubernetes_01KQ8866Y7VAC3EBHPPHPP7XH3

And there it is, the cluster is created!

To access the Kubernetes API, just run:

clever k8s get-kubeconfig cilium > ~/.kube/config

We can then run:

kubectl get node

The API responds, but it returns nothing because there are no nodes yet!

All it takes then is a YAML file:

kind: NodeGroup

metadata:

  name: example-nodegroup

spec:

  flavor: M

  nodeCount: 2

Then apply it:

kubectl apply -f nodegroup.yaml

In under 30 seconds, the nodes are ready:

kubectl get node

NAME STATUS ROLES AGE VERSION

example-nodegroup-node0 Ready <none> 25s v1.35.4

example-nodegroup-node1 Ready <none> 24s v1.35.4

Installing Cilium

Now that the cluster is set up, we can install Cilium! Woohoo, my favorite part! Oh wait, no: it is installed by default:

kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system cc-collector-mr4md 0/1 Running 0 38s

kube-system cc-collector-mrf8w 0/1 Running 0 38s

kube-system cilium-657px 1/1 Running 0 39s

kube-system cilium-kllhq 1/1 Running 0 38s

kube-system cilium-operator-99f84df59-fsggs 1/1 Running 0 9m12s

kube-system coredns-7597f4b8ff-69f82 1/1 Running 0 9m12s

kube-system konnectivity-agent-cnhwv 1/1 Running 0 37s

kube-system konnectivity-agent-lkgsh 1/1 Running 0 39s

kube-system kube-state-metrics-6d88d8556c-r4v57 1/2 Running 0 9m9s

kube-system kubelet-csr-approver-68c5944785-86559 1/1 Running 0 9m12s

kube-system metrics-server-c69c7d87d-k94ml 0/1 Running 0 9m10s

A look at Clever's Cilium

The Pods

First pleasant surprise: kube-proxy is not installed, so Cilium is running in kube-proxy replacement mode.

More surprisingly, the Cilium Envoy pods are missing. What is Envoy for in Cilium? Its main job is to enforce layer 7 Cilium Network Policies (for example, accepting only GET in HTTP requests). Originally, Envoy was embedded in the Cilium pod, which was an anti-pattern. For quite a few versions now, Envoy has been split out into its own DaemonSet by default. So Clever's version of Cilium goes back to the roots.
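To make the "GET only" example concrete, here is a minimal sketch of a layer 7 CiliumNetworkPolicy. The labels (app=backend, app=frontend), the policy name, the port and the file name are all hypothetical, and the snippet only writes the manifest to disk: enforcing it requires a Cilium installation whose L7 proxy is enabled.

```shell
# Write a hypothetical L7 policy to a file: pods labeled app=backend accept
# only HTTP GET requests on port 80/TCP coming from pods labeled app=frontend.
# The labels, policy name and file name are made up for this example.
cat > l7-get-only.yaml <<'EOF'
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-get-only
spec:
  endpointSelector:
    matchLabels:
      app: backend
  ingress:
    - fromEndpoints:
        - matchLabels:
            app: frontend
      toPorts:
        - ports:
            - port: "80"
              protocol: TCP
          rules:
            http:
              - method: "GET"
EOF

# To try it on a cluster where Cilium's L7 proxy is enabled:
#   kubectl apply -f l7-get-only.yaml
```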

One last thing: Hubble has not been installed. As a reminder, Hubble is a bit like tcpdump for Kubernetes: it lets you see all the network flows.
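These observations can be checked mechanically against the pod listing. The helper below is a small sketch, not an official tool: it assumes the usual pod name prefixes of a standard Cilium install (kube-proxy, cilium-envoy, hubble-relay) and only looks at the NAME column of `kubectl get pod -A`. Note that the absence of hubble-relay pods is only a hint; the enable-hubble option in the configuration is the authoritative answer.

```shell
# Read `kubectl get pod -A` output on stdin and report, for each component,
# whether a pod whose name starts with that prefix is present.
# The prefixes are assumptions based on a default Cilium installation.
check_components() {
  listing=$(cat)
  for name in kube-proxy cilium-envoy hubble-relay; do
    if printf '%s\n' "$listing" |
      awk -v n="$name" 'index($2, n) == 1 { found = 1 } END { exit !found }'; then
      echo "$name: present"
    else
      echo "$name: absent"
    fi
  done
}

# Usage against a live cluster:
#   kubectl get pod -A | check_components
```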

The CRDs

Now let's look at the CRDs:

kubectl get crd

NAME CREATED AT

ciliumcidrgroups.cilium.io 2026-04-27T20:05:30Z

ciliumclusterwidenetworkpolicies.cilium.io 2026-04-27T20:05:29Z

ciliumendpoints.cilium.io 2026-04-27T20:05:24Z

ciliumidentities.cilium.io 2026-04-27T20:05:22Z

ciliuml2announcementpolicies.cilium.io 2026-04-27T20:05:32Z

ciliumloadbalancerippools.cilium.io 2026-04-27T20:05:31Z

ciliumnetworkpolicies.cilium.io 2026-04-27T20:05:27Z

ciliumnodeconfigs.cilium.io 2026-04-27T20:05:33Z

ciliumnodes.cilium.io 2026-04-27T20:05:25Z

ciliumpodippools.cilium.io 2026-04-27T20:05:23Z

nodegroups.api.clever-cloud.com 2026-04-27T19:56:42Z

Nothing to report. The Cilium network policies and Cilium cluster-wide network policies are both there.

The configuration

The configuration can be viewed with the following command:

kubectl get cm -n kube-system cilium-config -o yaml

apiVersion: v1

data:

  bpf-lb-map-max: "65536"

  bpf-map-dynamic-size-ratio: "0.0025"

  bpf-policy-map-max: "16384"

  cluster-name: default

  cluster-pool-ipv4-cidr: 172.16.0.0/13

  cluster-pool-ipv4-mask-size: "24"

  cni-exclusive: "true"

  cni-log-file: /var/run/cilium/cilium-cni.log

  debug: "false"

  enable-bpf-masquerade: "true"

  enable-endpoint-health-checking: "true"

  enable-health-checking: "true"

  enable-hubble: "false"

  enable-ipv4: "true"

  enable-ipv6: "false"

  enable-l7-proxy: "false"

  enable-operator-leader-election: "true"

  install-iptables-rules: "true"

  ipam: cluster-pool

  k8s-service-host: kubernetes-apiserver_01KQ8866Y7VAC3EBHPPHPP7XH3.m.ng_eae2f39d-aad2-45d1-8a15-50f5aabeb2c4.cc-ng.cloud

  k8s-service-port: "8080"

  kube-proxy-replacement: "true"

  monitor-aggregation: medium

  monitor-aggregation-interval: 5s

  tunnel-protocol: vxlan

  write-cni-conf-when-ready: /host/etc/cni/net.d/05-cilium.conflist

kind: ConfigMap

metadata:

  creationTimestamp: "2026-04-27T19:56:38Z"

  labels:

    app.kubernetes.io/name: cilium-config

    app.kubernetes.io/part-of: cilium

    k8s-app: cilium

  name: cilium-config

  namespace: kube-system

  resourceVersion: "205"

  uid: 5caf7563-a377-4f53-a694-2610ae791665

This confirms that Cilium runs without Hubble and without kube-proxy. The Kubernetes API endpoint is also visible in the k8s-service-host option.

The enable-l7-proxy option is set to false, so there are no layer 7 network policies.
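Rather than scanning the whole ConfigMap by eye, individual options can be pulled out of the YAML dump. The cilium_opt helper below is a hypothetical convenience function written for this article, not part of any CLI; it simply matches `key: value` lines with awk.

```shell
# Print the value of one option from a cilium-config ConfigMap dumped as YAML
# (read on stdin), with the surrounding double quotes removed.
# Only the first matching line is used.
cilium_opt() {
  awk -v key="$1" '$1 == (key ":") { gsub(/"/, "", $2); print $2; exit }'
}

# Usage against a live cluster:
#   kubectl get cm -n kube-system cilium-config -o yaml | cilium_opt enable-l7-proxy
#   kubectl get cm -n kube-system cilium-config -o yaml | cilium_opt tunnel-protocol
```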

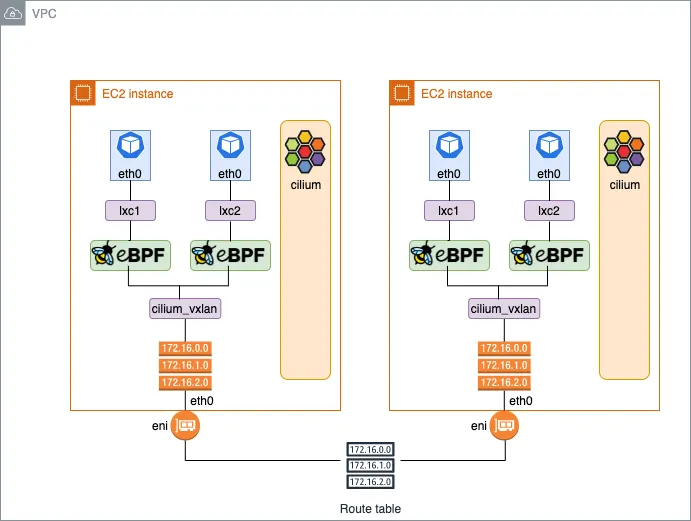

I see that the ipam option is set to cluster-pool: Cilium itself handles IP address management for the pods. This is the default option (see the documentation).
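As a quick back-of-the-envelope check of what this cluster-pool setup allows, the arithmetic below uses the two values from the ConfigMap (cluster-pool-ipv4-cidr and cluster-pool-ipv4-mask-size). It is a sketch of the sizing only, assuming one block per node; the variable names are made up for the example.

```shell
# Values copied from the cilium-config ConfigMap.
pool_prefix=13   # cluster-pool-ipv4-cidr: 172.16.0.0/13
node_prefix=24   # cluster-pool-ipv4-mask-size: "24"

# Number of /24 blocks that fit in the /13 pool.
node_blocks=$(( 1 << (node_prefix - pool_prefix) ))

# Addresses per block, minus the first and last address of the block.
ips_per_node=$(( (1 << (32 - node_prefix)) - 2 ))

echo "per-node blocks: $node_blocks"
echo "usable IPs per block: $ips_per_node"
```

The second figure lines up with the "5/254 allocated from 172.16.0.0/24" reported by cilium status further down.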

Finally, the tunnel-protocol option is set to vxlan. It determines how pods communicate across the nodes of the cluster. Native routing would have been more efficient.

Now let's look at the Cilium CA:

kubectl get secret -n kube-system

NAME TYPE DATA AGE

cc-collector-otlp-credentials Opaque 2 23m

Well, no, it doesn't exist. The Cilium CA is used to encrypt, via mTLS, the communication between the Cilium pods.

For more details on the configuration, here is one last command:

kubectl exec -it ds/cilium -n kube-system -c cilium-agent -- cilium status --verbose

KVStore: Disabled

Kubernetes: Ok 1.35 (v1.35.4) [linux/amd64]

Kubernetes APIs: ["cilium/v2::CiliumCIDRGroup", "cilium/v2::CiliumClusterwideNetworkPolicy", "cilium/v2::CiliumEndpoint", "cilium/v2::CiliumNetworkPolicy", "cilium/v2::CiliumNode", "core/v1::Pods", "networking.k8s.io/v1::NetworkPolicy"]

KubeProxyReplacement: True [ens3 10.2.216.208 fe80::cccd:2ff:fea8:a8cc, wg-50f5aabeb2c4 10.101.0.6 (Direct Routing)]

Host firewall: Disabled

SRv6: Disabled

CNI Chaining: none

CNI Config file: successfully wrote CNI configuration file to /host/etc/cni/net.d/05-cilium.conflist

Cilium: Ok 1.19.1 (v1.19.1-d0d0c879)

NodeMonitor: Disabled

Cilium health daemon: Ok

IPAM: IPv4: 5/254 allocated from 172.16.0.0/24,

Allocated addresses:

172.16.0.140 (kube-system/konnectivity-agent-lkgsh)

172.16.0.164 (kube-system/cc-collector-mrf8w)

172.16.0.200 (health)

172.16.0.206 (router)

172.16.0.39 (kube-system/coredns-7597f4b8ff-69f82)

IPv4 BIG TCP: Disabled

IPv6 BIG TCP: Disabled

BandwidthManager: Disabled

Routing: Network: Tunnel [vxlan] Host: BPF

Attach Mode: TCX

Device Mode: veth

Masquerading: BPF [ens3, wg-50f5aabeb2c4] 172.16.0.0/24 [IPv4: Enabled, IPv6: Disabled]

Clock Source for BPF: ktime

Controller Status: 29/29 healthy

Name Last success Last error Count Message

cilium-health-ep 36s ago never 0 no error

ct-map-pressure 7s ago never 0 no error

endpoint-153-regeneration-recovery never never 0 no error

endpoint-289-regeneration-recovery never never 0 no error

endpoint-317-regeneration-recovery never never 0 no error

endpoint-3776-regeneration-recovery never never 0 no error

endpoint-480-regeneration-recovery never never 0 no error

endpoint-gc 4m37s ago never 0 no error

endpoint-periodic-regeneration 25s ago never 0 no error

ipcache-inject-labels 38s ago never 0 no error

k8s-heartbeat 25s ago never 0 no error

local-identity-checkpoint 4m37s ago never 0 no error

resolve-identity-153 4m37s ago never 0 no error

resolve-identity-289 4m36s ago never 0 no error

resolve-identity-317 4m37s ago never 0 no error

resolve-identity-3776 4m28s ago never 0 no error

resolve-identity-480 4m37s ago never 0 no error

resolve-labels-kube-system/cc-collector-mrf8w 4m28s ago never 0 no error

resolve-labels-kube-system/coredns-7597f4b8ff-69f82 4m37s ago never 0 no error

resolve-labels-kube-system/konnectivity-agent-lkgsh 4m37s ago never 0 no error

sync-policymap-153 4m33s ago never 0 no error

sync-policymap-289 4m33s ago never 0 no error

sync-policymap-317 4m33s ago never 0 no error

sync-policymap-3776 4m27s ago never 0 no error

sync-policymap-480 4m33s ago never 0 no error

sync-to-k8s-ciliumendpoint (153) 7s ago never 0 no error

sync-to-k8s-ciliumendpoint (317) 7s ago never 0 no error

sync-to-k8s-ciliumendpoint (3776) 8s ago never 0 no error

write-cni-file 4m40s ago never 0 no error

Proxy Status: OK, ip 172.16.0.206, 0 redirects active on ports 10000-20000, Envoy: embedded

Global Identity Range: min 256, max 65535

Hubble: Disabled Metrics: Disabled

KubeProxyReplacement Details:

Status: True

Socket LB: Enabled

Socket LB Tracing: Enabled

Socket LB Coverage: Full

Devices: ens3 10.2.216.208 fe80::cccd:2ff:fea8:a8cc, wg-50f5aabeb2c4 10.101.0.6 (Direct Routing)

Mode: SNAT

Backend Selection: Random

Session Affinity: Enabled

NAT46/64 Support: Disabled

XDP Acceleration: Disabled

Services:

- ClusterIP: Enabled

- NodePort: Enabled (Range: 30000-32767)

- LoadBalancer: Enabled

- externalIPs: Enabled

- HostPort: Enabled

Annotations:

- service.cilium.io/node

- service.cilium.io/node-selector

- service.cilium.io/proxy-delegation

- service.cilium.io/src-ranges-policy

- service.cilium.io/type

BPF Maps: dynamic sizing: on (ratio: 0.002500)

Name Size

Auth 524288

Non-TCP connection tracking 71811

TCP connection tracking 143622

Endpoints 65535

IP cache 512000

IPv4 masquerading agent 16384

IPv6 masquerading agent 16384

IPv4 fragmentation 8192

IPv4 service 65536

IPv6 service 65536

IPv4 service backend 65536

IPv6 service backend 65536

IPv4 service reverse NAT 65536

IPv6 service reverse NAT 65536

Metrics 1024

Ratelimit metrics 64

NAT 143622

Neighbor table 143622

Endpoint policy 16384

Policy stats 65530

Session affinity 65536

Sock reverse NAT 71811

Encryption: Disabled

Cluster health: 2/2 reachable (2026-04-27T20:08:54Z) (Probe interval: 1m36.566274746s)

Name IP Node Endpoints

example-nodegroup-node0 (localhost):

Host connectivity to 10.101.0.6:

ICMP to stack: OK, RTT=91.091µs (Last probed: 2026-04-27T20:07:18Z)

HTTP to agent: OK, RTT=202.812µs (Last probed: 2026-04-27T20:07:18Z)

Endpoint connectivity to 172.16.0.200:

ICMP to stack: OK, RTT=158.001µs (Last probed: 2026-04-27T20:07:42Z)

HTTP to agent: OK, RTT=413.873µs (Last probed: 2026-04-27T20:07:42Z)

example-nodegroup-node1:

Host connectivity to 10.101.0.7:

ICMP to stack: OK, RTT=1.003107ms (Last probed: 2026-04-27T20:09:18Z)

HTTP to agent: OK, RTT=1.247591ms (Last probed: 2026-04-27T20:09:18Z)

Endpoint connectivity to 172.16.1.191:

ICMP to stack: OK, RTT=1.074644ms (Last probed: 2026-04-27T20:08:54Z)

HTTP to agent: OK, RTT=1.303905ms (Last probed: 2026-04-27T20:08:54Z)

Modules Health: agent

├── controlplane

│ ├── agent-infra-endpoints

│ │ └── job-init-host-endpoint [STOPPED] Start initialization (4m37s, x1)

│ ├── bgp-control-plane

│ │ ├── observer-job-default-gateway-route-change-tracker [OK] OK (720ns) [44] (4m28s, x1)

│ │ └── observer-job-device-change-device-change-tracker [OK] OK (1.77µs) [25] (4m28s, x1)

│ ├── cgroup-manager

│ │ └── job-process-pod-events [OK] Running (4m40s, x1)

│ ├── cilium-agent-dynamic-config

│ │ └── job-k8s-reflector-cilium-configs-cm-cilium-config-kube-system [OK] 26 upserted, 0 deleted, 26 total objects (4m38s, x1)

│ ├── cilium-health

│ │ └── job-init [STOPPED] Start initialization (4m37s, x1)

│ ├── ciliumenvoyconfig

│ │ ├── job-node-labels [OK] Running (4m38s, x1)

│ │ ├── job-reconcile [OK] OK, 0 object(s) (4m38s, x2)

│ │ └── job-refresh [OK] Next refresh in 30m0s (4m38s, x1)

│ ├── clustermesh

│ │ └── job-clustermesh-nodemanager-notifier [STOPPED] Running (4m38s, x1)

│ ├── config-drift-checker

│ │ └── job-drift-checker [OK] Running (4m38s, x1)

│ ├── daemonconfig

│ │ └── timer-job-validate-unchanged-daemon-config [OK] OK (69.071µs) (36s, x1)

│ ├── dynamic-lifecycle-manager

│ │ ├── job-reconcile [OK] OK, 0 object(s) (4m38s, x3)

│ │ └── job-refresh [OK] Next refresh in 30m0s (4m38s, x1)

│ ├── enabled-features

│ │ └── job-update-config-metric [STOPPED] Waiting for agent config (4m38s, x1)

│ ├── endpoint-api

│ │ ├── job-cni-deletion-queue [STOPPED] Running (4m40s, x1)

│ │ └── job-unlock-lockfile [STOPPED] Running (4m38s, x1)

│ ├── endpoint-manager

│ │ ├── cilium-endpoint-153 (kube-system/konnectivity-agent-lkgsh)

│ │ │ ├── cep-k8s-sync [OK] sync-to-k8s-ciliumendpoint (153) (7s, x29)

│ │ │ ├── datapath-regenerate [OK] Endpoint regeneration successful (25s, x5)

│ │ │ └── policymap-sync [OK] sync-policymap-153 (4m33s, x1)

│ │ ├── cilium-endpoint-289 (/)

│ │ │ ├── datapath-regenerate [OK] Endpoint regeneration successful (25s, x5)

│ │ │ └── policymap-sync [OK] sync-policymap-289 (4m33s, x1)

│ │ ├── cilium-endpoint-317 (kube-system/coredns-7597f4b8ff-69f82)

│ │ │ ├── cep-k8s-sync [OK] sync-to-k8s-ciliumendpoint (317) (7s, x29)

│ │ │ ├── datapath-regenerate [OK] Endpoint regeneration successful (25s, x5)

│ │ │ └── policymap-sync [OK] sync-policymap-317 (4m33s, x1)

│ │ ├── cilium-endpoint-3776 (kube-system/cc-collector-mrf8w)

│ │ │ ├── cep-k8s-sync [OK] sync-to-k8s-ciliumendpoint (3776) (8s, x28)

│ │ │ ├── datapath-regenerate [OK] Endpoint regeneration successful (25s, x3)

│ │ │ └── policymap-sync [OK] sync-policymap-3776 (4m27s, x1)

│ │ ├── cilium-endpoint-480 (/)

│ │ │ ├── datapath-regenerate [OK] Endpoint regeneration successful (25s, x5)

│ │ │ └── policymap-sync [OK] sync-policymap-480 (4m33s, x1)

│ │ ├── endpoint-gc [OK] endpoint-gc (4m37s, x1)

│ │ ├── job-init-periodic-endpoint-controllers [STOPPED] Running (4m40s, x1)

│ │ └── job-namespace-updater [OK] Running (4m38s, x1)

│ ├── endpoint-restore

│ │ └── job-finish-endpoint-restore [STOPPED] Regenerating restored endpoints (4m37s, x1)

│ ├── ep-bpf-prog-watchdog

│ │ ├── job-wait-for-endpoint-restore [STOPPED] Running (4m38s, x1)

│ │ └── timer-job-ep-bpf-prog-watchdog [OK] OK (1.102242ms) (7s, x1)

│ ├── fqdn

│ │ └── namemanager

│ │ ├── job-wait-for-endpoint-restore [STOPPED] OK (4m37s, x1)

│ │ ├── observer-job-preallocate [OK] Primed (4m40s, x1)

│ │ └── timer-job-dns-garbage-collector-job [OK] OK (15.86µs) (37s, x1)

│ ├── hostip-sync

│ │ └── job-sync-hostips [OK] Synchronized (38s, x6)

│ ├── hubble

│ │ ├── job-hubble [STOPPED] Running (4m38s, x1)

│ │ └── timer-job-hubble-namespace-cleanup [OK] Primed (4m38s, x1)

│ ├── ipam

│ │ └── ipam-podippool-table

│ │ └── job-k8s-reflector-podippools-daemon-k8s [OK] 0 upserted, 0 deleted, 0 total objects (4m39s, x1)

│ ├── ipcache

│ │ ├── job-api-server-backend-watcher [OK] Running (4m38s, x1)

│ │ └── job-release-local-identities [STOPPED] Running (4m40s, x1)

│ ├── k8s-tables

│ │ ├── job-k8s-reflector-k8s-namespaces-daemon-k8s [OK] 4 upserted, 0 deleted, 4 total objects (4m40s, x1)

│ │ └── job-k8s-reflector-k8s-pods-daemon-k8s [OK] 1 upserted, 0 deleted, 5 total objects (4m16s, x8)

│ ├── l2-announcer

│ │ └── job-l2-announcer-lease-gc [STOPPED] Running (4m38s, x1)

│ ├── loadbalancer-healthserver

│ │ └── job-control-loop [OK] 0 health servers running (4m16s, x11)

│ ├── loadbalancer-maps

│ │ └── timer-job-pressure-metrics-reporter [OK] Primed (4m38s, x1)

│ ├── loadbalancer-reconciler

│ │ ├── job-reconcile [OK] OK, 5 object(s) (4m16s, x9)

│ │ ├── job-refresh [OK] Next refresh in 30m0s (4m38s, x1)

│ │ ├── job-start-reconciler [STOPPED] Running (4m38s, x1)

│ │ └── socket-termination

│ │ └── job-socket-termination [OK] Running (4m38s, x1)

│ ├── loadbalancer-reflectors

│ │ └── k8s-reflector

│ │ ├── job-reflect-pods [OK] Running (4m38s, x1)

│ │ └── job-reflect-services-endpoints [OK] Running (4m38s, x1)

│ ├── loadbalancer-writer

│ │ └── job-node-addr-reconciler [OK] Running (4m38s, x1)

│ ├── local-node-store

│ │ └── job-sync-local-node [OK] Running (4m40s, x1)

│ ├── nat-stats

│ │ └── timer-job-nat-stats [OK] OK (1.426895ms) (8s, x1)

│ ├── node-manager

│ │ ├── job-backgroundSync [OK] Node validation successful (22s, x4)

│ │ ├── node-checkpoint-writer [OK] node checkpoint written (2m38s, x3)

│ │ ├── nodes-add [OK] Node adds successful (4m38s, x2)

│ │ └── nodes-update [OK] Node updates successful (4m38s, x1)

│ ├── policy

│ │ ├── observer-job-policy-importer [OK] Primed (4m40s, x1)

│ │ └── timer-job-id-alloc-update-policy-maps [OK] OK (67.591µs) (4m28s, x1)

│ ├── stale-endpoint-cleanup

│ │ └── job-endpoint-cleanup [STOPPED] Running (4m38s, x1)

│ ├── status

│ │ └── job-probes [OK] Running (4m38s, x1)

│ └── ztunnel

│ └── enrollment-reconciler

│ └── job-derive-desired-mtls-namespace-enrollments [OK] Running (4m38s, x1)

├── datapath

│ ├── agent-liveness-updater

│ │ └── timer-job-agent-liveness-updater [OK] OK (43.101µs) (0s, x1)

│ ├── iptables

│ │ ├── ipset

│ │ │ ├── job-ipset-init-finalizer [STOPPED] Running (4m40s, x1)

│ │ │ ├── job-reconcile [OK] OK, 0 object(s) (4m40s, x2)

│ │ │ └── job-refresh [OK] Next refresh in 30m0s (4m40s, x1)

│ │ └── job-iptables-reconciliation-loop [OK] iptables rules full reconciliation completed (4m38s, x1)

│ ├── l2-responder

│ │ └── job-l2-responder-reconciler [OK] Running (4m38s, x1)

│ ├── link-cache

│ │ └── timer-job-sync [OK] OK (419.314µs) (8s, x1)

│ ├── loader

│ │ └── job-per-endpoint-route-initializer [STOPPED] Running (4m40s, x1)

│ ├── maps

│ │ ├── bwmap

│ │ │ └── timer-job-pressure-metric-throttle [OK] OK (2.02µs) (8s, x1)

│ │ └── ct-nat-map-gc

│ │ ├── job-enable-gc [STOPPED] Running (4m40s, x1)

│ │ ├── observer-job-nat-map-next4 [OK] Primed (4m40s, x1)

│ │ └── observer-job-nat-map-next6 [OK] Primed (4m40s, x1)

│ ├── maps-cleanup

│ │ └── job-cleanup [STOPPED] Running (4m38s, x1)

│ ├── mtu

│ │ ├── job-endpoint-mtu-updater [OK] Endpoint MTU updated (4m38s, x1)

│ │ └── job-mtu-updater [OK] MTU updated (1420) (4m40s, x1)

│ ├── node-address

│ │ ├── [OK] 172.16.0.206 (primary), fe80::e48b:33ff:fe00:2627 (primary) (4m38s, x1)

│ │ └── job-node-address-update [OK] Running (4m40s, x1)

│ ├── orchestrator

│ │ └── job-reinitialize [OK] OK (4m24s, x2)

│ ├── route-reconciler

│ │ ├── job-desired-route-refresher [OK] Running (4m38s, x1)

│ │ ├── job-reconcile [OK] OK, 0 object(s) (4m36s, x3)

│ │ └── job-refresh [OK] Next refresh in 30m0s (4m40s, x1)

│ ├── sysctl

│ │ ├── job-reconcile [OK] OK, 20 object(s) (4m33s, x11)

│ │ └── job-refresh [OK] Next refresh in 10m0s (4m40s, x1)

│ └── utime

│ └── timer-job-sync-userspace-and-datapath [OK] OK (116.402µs) (38s, x1)

└── infra

├── agent-healthz

│ └── job-agent-healthz-server-ipv4 [OK] Running (4m38s, x1)

├── k8s-synced-crdsync

│ └── job-sync-crds [STOPPED] Running (4m55s, x1)

├── metrics

│ ├── job-collect [OK] Sampled 24 metrics in 1.965021ms, next collection at 2026-04-27 20:10:35.795728186 +0000 UTC m=+317.352167531 (4m38s, x1)

│ └── timer-job-cleanup [OK] Primed (4m38s, x1)

└── shell

└── job-listener [OK] Listening on /var/run/cilium/shell.sock (4m38s, x1)

We can see, for example, that Cilium is at version 1.19.1 (the latest version is 1.19.3).
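That version comparison can be scripted. The two helpers below are a sketch with hypothetical names: cilium_version reads the "Cilium:" line of the status output, and is_older relies on `sort -V`, which assumes GNU coreutils (or a sort that supports version ordering).

```shell
# Extract the Cilium version from `cilium status` output read on stdin,
# i.e. from a line such as: "Cilium: Ok 1.19.1 (v1.19.1-d0d0c879)".
cilium_version() {
  awk '$1 == "Cilium:" { print $3; exit }'
}

# Succeed when version $1 is strictly older than version $2.
# Relies on `sort -V` (version sort).
is_older() {
  [ "$1" != "$2" ] &&
    [ "$(printf '%s\n' "$1" "$2" | sort -V | head -n 1)" = "$1" ]
}

# Usage against a live cluster:
#   running=$(kubectl exec ds/cilium -n kube-system -c cilium-agent -- cilium status | cilium_version)
#   is_older "$running" 1.19.3 && echo "a newer Cilium release exists"
```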

A few questions remain on my mind. How are Cilium upgrades handled? And if we want to customize Cilium, what do we need to do so that the default configuration isn't restored over our changes?

I hope you enjoyed this quick tour of CKE through the lens of Cilium :)